Janitor Bot

Description as a Tweet:

A small robot that identifies and removes trash. Using computer vision, it distinguishes trash from non-trash items and logs the trash it finds to a Google Sheet.

Inspiration:

We wanted to try our hand at a hardware hack and were interested in the Google Vision API. With those interests in mind, we looked for a project with practical utility and landed on a janitor robot that could tidy up large public spaces. Large, flat areas are an ideal environment for a wheeled robot, and the logging feature is especially useful there, since the people who manage such a space may want to know what kinds of litter are left behind, and how much.

What it does:

Janitor Bot wanders around looking for trash. When a piece of trash, identified using Google's Vision API, comes into its field of view, the robot approaches it, collects it in its scoop, and carries it to a predetermined receptacle. It also logs an image of the trash, the type of trash found, and a timestamp to a Google Sheet for easy viewing and analysis by a lay audience.
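The wander/approach/deliver cycle described above can be sketched as a small state machine. This is a minimal sketch, not the team's actual control code: the state names and the three boolean inputs are hypothetical stand-ins for whatever the camera, the Vision API results, and the navigation code actually report.

```python
from enum import Enum, auto


class State(Enum):
    """Hypothetical high-level states for the robot's work cycle."""
    WANDER = auto()    # roam until trash appears in the camera frame
    APPROACH = auto()  # drive toward the detected trash
    DELIVER = auto()   # carry the scooped trash to the receptacle


def next_state(state, trash_visible, trash_in_scoop, at_receptacle):
    """Return the next state given the robot's current (hypothetical) sensor readings."""
    if state is State.WANDER:
        return State.APPROACH if trash_visible else State.WANDER
    if state is State.APPROACH:
        if trash_in_scoop:
            return State.DELIVER
        # Lost sight of the trash before reaching it: go back to roaming.
        return State.APPROACH if trash_visible else State.WANDER
    if state is State.DELIVER:
        # Once the trash is dropped off at the receptacle, resume wandering.
        return State.WANDER if at_receptacle else State.DELIVER
    raise ValueError(f"unknown state: {state!r}")


state = State.WANDER
state = next_state(state, trash_visible=True, trash_in_scoop=False, at_receptacle=False)
```

After the call above, `state` is `State.APPROACH`. Keeping the transition logic separate from the motor and camera code makes it possible to test on a laptop before running anything on the Pi.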

How we built it:

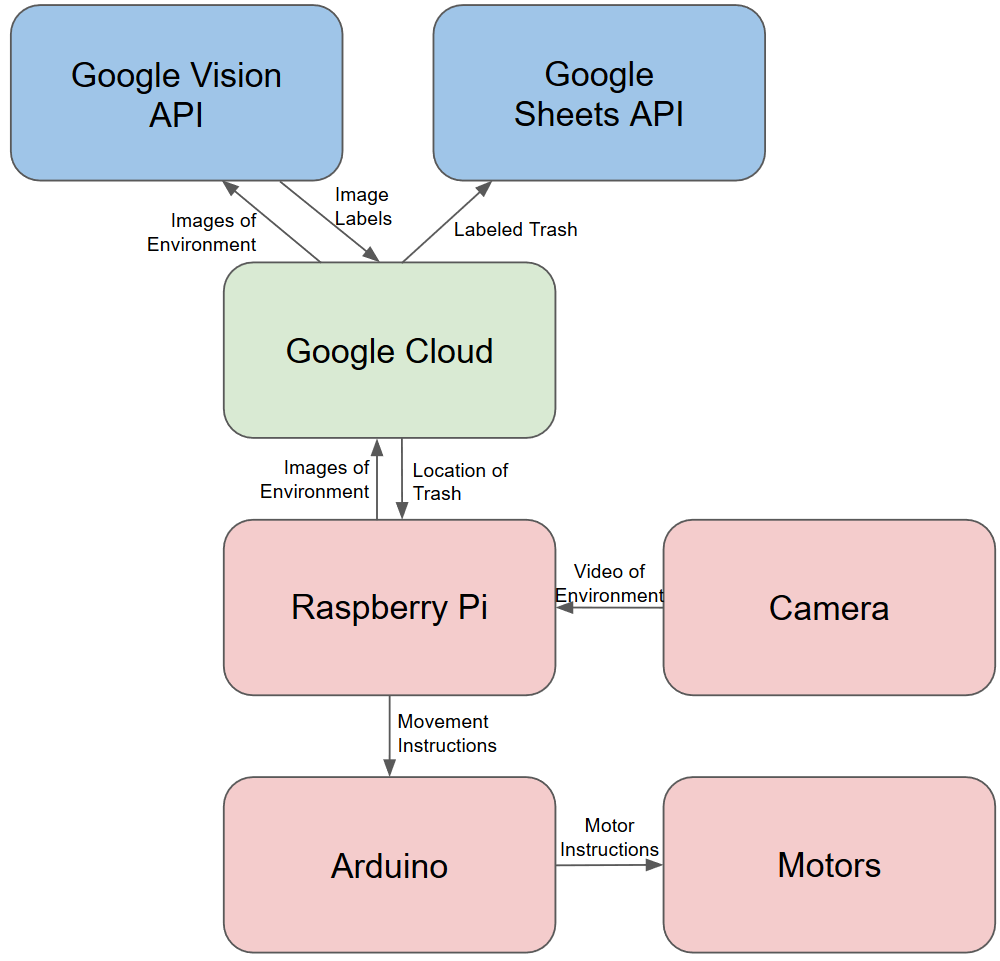

We used a Raspberry Pi as the core of our robot, adding motors and a motor shield so it can move and a camera so it can see. A Google Cloud instance runs the Vision API on images from the Pi's camera to determine their contents, and also runs the logging procedure once trash is found. The physical scoop used to pick up the trash was designed by one of our team members and 3D printed.
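On the cloud side, the Vision API's output has to be turned into a trash / not-trash decision. A minimal sketch of that step, assuming label detection results are reduced to `(description, score)` pairs (the Vision API's label annotations do carry `description` and `score` fields); the keyword list and the 0.7 threshold are hypothetical and would need tuning on real images.

```python
# Hypothetical keyword list; not the team's actual categories.
TRASH_KEYWORDS = {"bottle", "can", "cup", "wrapper", "paper", "plastic bag", "litter", "waste"}


def _matches(name, keyword):
    # Whole-word match so that, e.g., "can" does not match "candle".
    return f" {keyword} " in f" {name} "


def classify_labels(labels, min_score=0.7):
    """Return the highest-scoring label that looks like trash, or None.

    `labels` is a list of (description, score) pairs, e.g. built from the
    `description` and `score` fields of Vision API label annotations.
    """
    for description, score in sorted(labels, key=lambda pair: pair[1], reverse=True):
        if score < min_score:
            continue  # too uncertain to act on
        name = description.lower()
        if any(_matches(name, keyword) for keyword in TRASH_KEYWORDS):
            return name
    return None


print(classify_labels([("Bottle", 0.92), ("Water", 0.88)]))
```

The returned label doubles as the "type of trash" recorded in the log, so a single Vision API call can serve both the detection and the logging step.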

One of our team members focused on the physical components of the robot, two others wrote code for the Raspberry Pi and its peripherals, and the last two worked on the Google Cloud components.

Technologies we used:

- Python

- Flask

- Arduino

- Raspberry Pi

- Robotics

Challenges we ran into:

This was the first hardware hack for all of us, so we had to figure out how to use every component. We originally used an Arduino as an interface between the Raspberry Pi and the motors, which meant learning yet another platform; despite some significant advantages over using the Pi alone, we eventually found this setup unworkable and dropped it.

All of the APIs we used were new to us as well, so everyone had to pick up new skills. We also discovered that the Google Sheets API we used for logging doesn't support direct image uploads, so we had to devise a workaround involving a separate image host.
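One common way to show an externally hosted picture in a sheet is Google Sheets' `IMAGE()` formula, which renders an image from a URL. The sketch below assumes that approach (the writeup does not say exactly how the workaround was wired up) and builds one log row; `image_url` is assumed to be whatever address the separate image host returns.

```python
from datetime import datetime, timezone


def build_log_row(trash_type, image_url, when=None):
    """Build one spreadsheet row: timestamp, trash type, and an inline image.

    Assumes the photo has already been uploaded to a separate image host
    and that `image_url` is its public address.
    """
    when = when or datetime.now(timezone.utc)
    # Double any quotes so the URL cannot break out of the formula's string literal.
    safe_url = image_url.replace('"', '""')
    return [when.isoformat(timespec="seconds"), trash_type, f'=IMAGE("{safe_url}")']


row = build_log_row("bottle", "https://example.com/a.jpg")
```

When appending such a row through the Sheets API's `spreadsheets.values.append`, the `valueInputOption` must be `USER_ENTERED` for the `=IMAGE(...)` text to be parsed as a formula; with `RAW` it is stored as a plain string.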

Getting a working scoop also proved challenging, since none of us had used a 3D printer before either. It took several iterations to shrink the scoop to a size that could finish printing in a reasonable time while remaining functional.

Accomplishments we're proud of:

We are most proud of the simple fact that the many systems involved in our project all work, and work together. In particular, connecting the Raspberry Pi directly to the motor shield was quite challenging, and we were glad it ended up working out.

What we've learned:

As mentioned earlier, we all learned quite a bit: how to use a Raspberry Pi, motors, cameras, Google Cloud, 3D printers, and various APIs. We also gained an appreciation for how hard robotics can be.

What's next:

To be used in a practical setting, the robot would first and foremost need a real casing around its exposed electronics. More interestingly, we originally intended to build an articulated, powered scoop that would let the robot collect larger objects and a wider variety of sizes. With more time, this would likely be our first major improvement. The robot's movement could also be streamlined so that it completes its job faster.

Built with:

We used a Raspberry Pi, motors, a motor shield, a camera, a chassis from a kit, and a 3D-printed scoop as our physical components. We used a little C for the layer closest to the motors and Python for the rest of our stack, including calls to Google's Vision and Sheets APIs.
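Between seeing the trash and driving the motors, the Python side has to decide how to steer. The writeup doesn't describe this step, so the following is a hypothetical proportional-steering sketch for a two-wheeled (differential-drive) chassis: it takes a bounding box's horizontal extent in normalized image coordinates (the Vision API reports normalized vertices for localized objects) and returns left/right wheel speeds in [-1, 1]. Converting those speeds into motor-shield commands is left to the lower-level motor layer; `base_speed` and `gain` are made-up defaults.

```python
def steer_toward(box_left, box_right, base_speed=0.6, gain=0.8):
    """Map a bounding box's horizontal position to (left, right) wheel speeds.

    box_left and box_right are normalized x coordinates in [0, 1].
    """
    center = (box_left + box_right) / 2
    error = center - 0.5  # negative: trash is left of center; positive: right
    turn = gain * error
    # Speed up the wheel on the side away from the target so the robot turns toward it,
    # clamping both speeds to the [-1, 1] range.
    left = max(-1.0, min(1.0, base_speed + turn))
    right = max(-1.0, min(1.0, base_speed - turn))
    return left, right


left, right = steer_toward(0.8, 1.0)  # trash near the right edge of the frame
```

Here `left` ends up larger than `right`, so the robot veers right toward the trash; a centered box yields equal speeds and the robot drives straight.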

Prizes we're going for:

- Best Hardware Hack

- Best Documentation

- Best Use of Google Cloud

Team Members:

Benjamin Johnson-Staub

Mitchell Burns

Kelly Britton